diamond data set from UsingR

The data are diamond prices (in Singapore dollars) and diamond weights in carats (a standard measure of diamond mass, 0.2 \(g\)). To get the data, use library(UsingR); data(diamond)

Brian Caffo, Jeff Leek and Roger Peng

Johns Hopkins Bloomberg School of Public Health


library(UsingR); data(diamond)

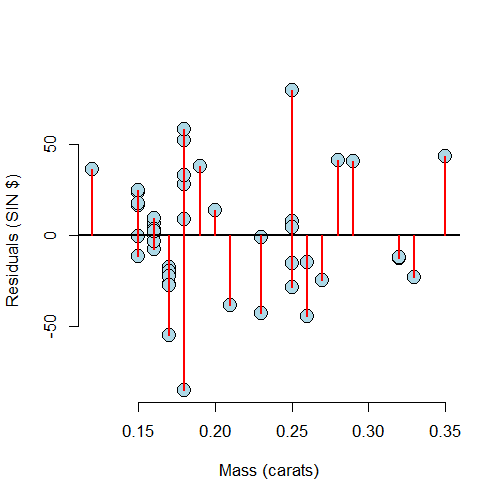

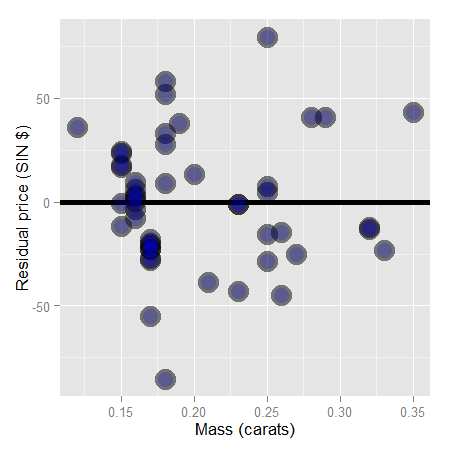

y <- diamond$price; x <- diamond$carat; n <- length(y)

fit <- lm(y ~ x)

e <- resid(fit)

yhat <- predict(fit)

max(abs(e -(y - yhat)))

[1] 9.486e-13

max(abs(e - (y - coef(fit)[1] - coef(fit)[2] * x)))

[1] 9.486e-13

y <- diamond$price; x <- diamond$carat; n <- length(y)

fit <- lm(y ~ x)

summary(fit)$sigma

[1] 31.84

sqrt(sum(resid(fit)^2) / (n - 2))

[1] 31.84

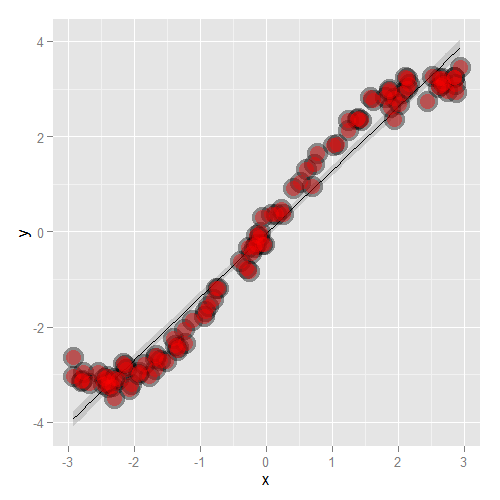

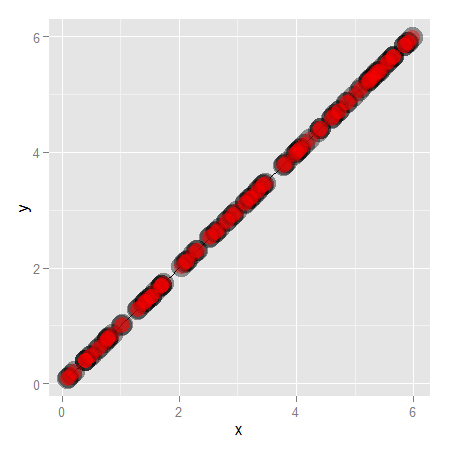

Run example(anscombe) to see the following data.

data(anscombe);example(anscombe)
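As a rough sketch of why the Anscombe data are worth looking at, the block below fits a separate line to each of the four x/y pairs and tabulates the fitted summaries. The names `fits` and `anscombe_summary` are illustrative choices, not part of the original slides. The four fits should come out nearly identical (intercept near 3, slope near 0.5, \(R^2\) near 0.67), even though the scatterplots look very different.

```r
# anscombe ships with base R's datasets package, so no extra install is needed
data(anscombe)

# Fit y_k ~ x_k for each of the four x/y pairs
fits <- lapply(1:4, function(k) {
  lm(anscombe[[paste0("y", k)]] ~ anscombe[[paste0("x", k)]])
})

# One row per fit: intercept, slope, residual standard deviation, R^2
anscombe_summary <- t(sapply(fits, function(f) {
  c(intercept = unname(coef(f)[1]),
    slope     = unname(coef(f)[2]),
    sigma     = summary(f)$sigma,
    r.squared = summary(f)$r.squared)
}))

print(round(anscombe_summary, 2))
```

Summary numbers alone cannot distinguish these four data sets, which is the usual argument for plotting the data and the residuals as well.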

\[ \begin{align} \sum_{i=1}^n (Y_i - \bar Y)^2 & = \sum_{i=1}^n (Y_i - \hat Y_i + \hat Y_i - \bar Y)^2 \\ & = \sum_{i=1}^n (Y_i - \hat Y_i)^2 + 2 \sum_{i=1}^n (Y_i - \hat Y_i)(\hat Y_i - \bar Y) + \sum_{i=1}^n (\hat Y_i - \bar Y)^2 \\ \end{align} \]
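For a least squares fit with an intercept, the cross term in this expansion is zero, because the residuals sum to zero and are orthogonal to the predictor. The total variation therefore splits into residual variation plus regression variation. The following is a minimal numerical check of that claim. It assumes the built-in anscombe data (first x/y pair) rather than the diamond data so that it runs without extra packages, and the variable names are illustrative.

```r
data(anscombe)
y <- anscombe$y1; x <- anscombe$x1

fit  <- lm(y ~ x)
yhat <- predict(fit)

total      <- sum((y - mean(y))^2)               # total variation
residual   <- sum((y - yhat)^2)                  # residual variation
regression <- sum((yhat - mean(y))^2)            # regression variation
cross      <- sum((y - yhat) * (yhat - mean(y))) # cross term

# The cross term should be zero up to floating point error
print(cross)

# Total variation should equal residual plus regression variation
print(all.equal(total, residual + regression))
```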

Recall that \((\hat Y_i - \bar Y) = \hat \beta_1 (X_i - \bar X)\), so that

\[ R^2 = \frac{\sum_{i=1}^n (\hat Y_i - \bar Y)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} = \hat \beta_1^2 \frac{\sum_{i=1}^n(X_i - \bar X)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} = Cor(Y, X)^2, \]

where the last equality follows from

\[ \hat \beta_1 = Cor(Y, X)\frac{Sd(Y)}{Sd(X)}. \]

So \(R^2\) is literally \(r\) squared.
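The identity can be checked numerically. The sketch below again assumes the built-in anscombe data (first x/y pair) as a stand-in for any simple linear regression, and the variable names are illustrative. It computes \(R^2\) three ways: as the ratio of regression to total variation, as reported by summary(), and as the squared sample correlation.

```r
data(anscombe)
y <- anscombe$y1; x <- anscombe$x1
fit <- lm(y ~ x)

# R^2 as regression variation over total variation
r2_ratio <- sum((predict(fit) - mean(y))^2) / sum((y - mean(y))^2)

# R^2 as reported by summary.lm, and as the squared sample correlation
r2_lm  <- summary(fit)$r.squared
r2_cor <- cor(y, x)^2

# Slope written in terms of the correlation and the standard deviations
slope_from_cor <- cor(y, x) * sd(y) / sd(x)

print(c(ratio = r2_ratio, lm = r2_lm, cor_squared = r2_cor))
print(all.equal(unname(coef(fit)[2]), slope_from_cor))
```

All three \(R^2\) values should agree up to floating point error, and the fitted slope should match the correlation-based formula.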